TechSource Systems is MathWorks Authorised Reseller and Training Partner

Learn how to develop and verify automated driving perception algorithms in MATLAB

An automated driving system consists of four main components: perception, localization, planning, and control. Automated Driving Toolbox™ focuses on perception, which covers the low-level processing of data from sensors such as cameras, radar, and lidar to produce high-level outputs such as object detections and lane detections. Common problems tackled in automated driving include how to visualize vehicle data, how to detect lanes and vehicles, and how to fuse detections from multiple sensors.

This two-day course provides hands-on experience with developing and verifying automated driving perception algorithms. Examples and exercises demonstrate the use of appropriate MATLAB® and Automated Driving Toolbox™ functionality. Topics include:

Engineers who develop automated driving systems.

MATLAB Fundamentals or equivalent experience using MATLAB; Image Processing with MATLAB; Computer Vision with MATLAB; and basic knowledge of image processing and computer vision concepts. Deep Learning with MATLAB is recommended.

Upon completion of the course, participants will be able to:

Objective: Label ground truth data in a video or sequence of images interactively. Automate the labeling with detection and tracking algorithms.

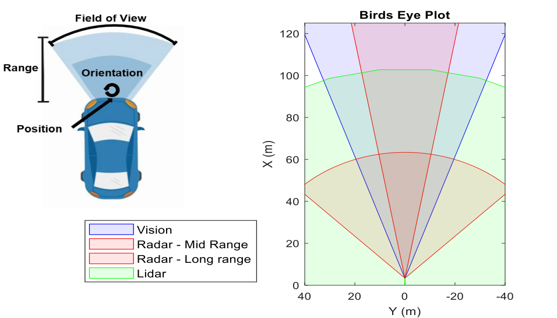

Objective: Visualize camera frames, radar, and lidar detections. Use appropriate coordinate systems to transform image coordinates to vehicle coordinates and vice versa.

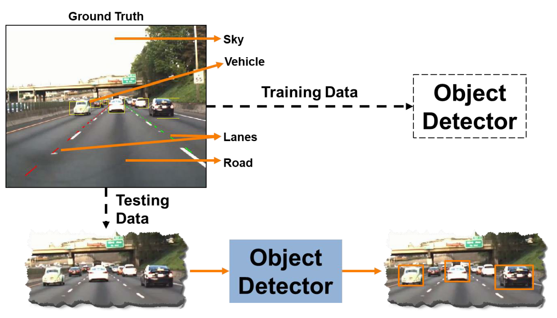

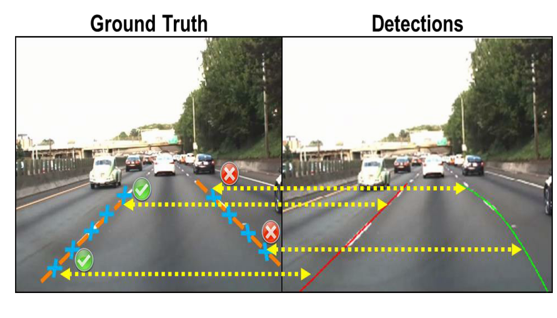

Objective: Segment and model parabolic lane boundaries. Use pretrained object detectors to detect vehicles.

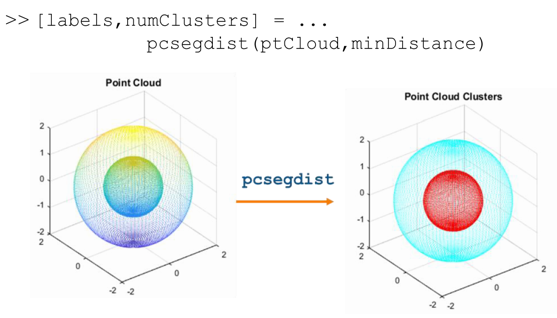

Objective: Work with lidar data stored as 3-D point clouds. Import, visualize, and process point clouds by segmenting them into clusters. Register point clouds to align and build an accumulated point cloud map.

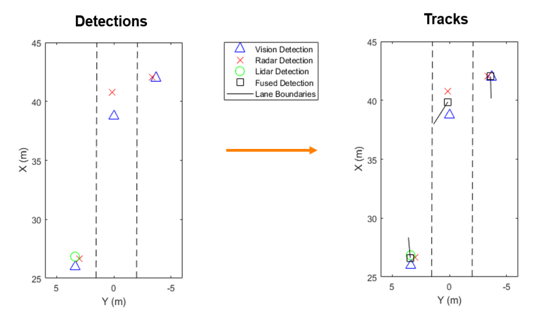

Objective: Create a multi-object tracker to fuse information from multiple sensors such as camera, radar and lidar.

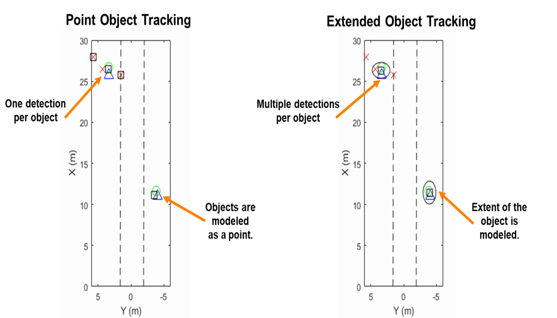

Objective: Create a probability hypothesis density tracker to track extended objects and estimate their spatial extent.

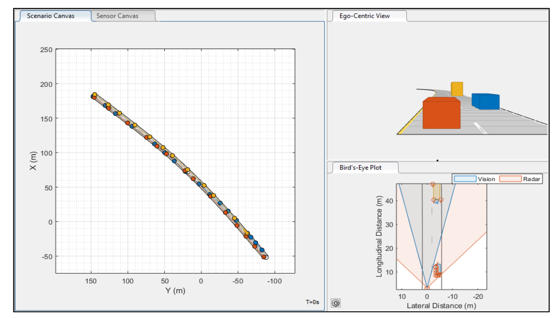

Objective: Create driving scenarios and synthetic radar and camera sensor detections interactively to test automated driving perception algorithms.