TechSource Systems is a MathWorks Authorised Reseller and Training Partner

Learn how to use the advanced MAC intrinsics, the AI Engine library for faster development, and advanced features in adaptive data flow (ADF) graph implementation.

This course describes the system design flow and the interfaces that can be used for data movement in the Versal® AI Engine. It also demonstrates how to use the advanced MAC intrinsics, the AI Engine library for faster development, and advanced features in adaptive data flow (ADF) graph implementation, such as streams, cascade streams, buffer location constraints, run-time parameterization, and APIs to update and/or read run-time parameters. The emphasis of this two-day course is on:

Software and hardware developers, system architects, and anyone who needs to accelerate their software applications using Xilinx devices

After completing this comprehensive training, you will have the necessary skills to:

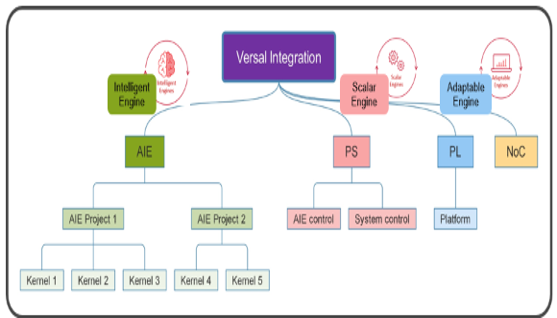

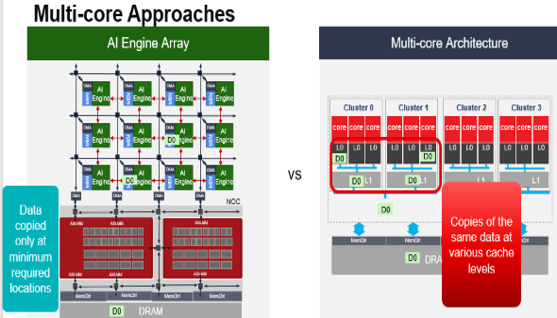

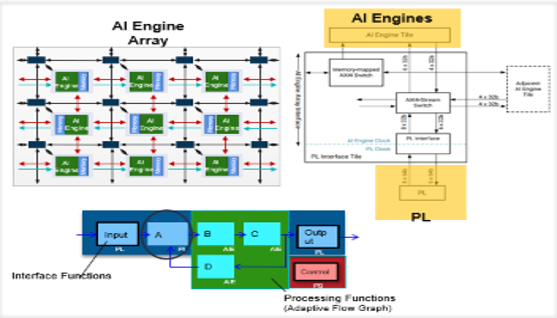

Objective: Covers what application partitioning is and how an application can be accelerated by using various compute engines in the Versal ACAP. Also describes how different models of computation (sequential, concurrent, and functional) can be mapped to the Versal ACAP.

Objective: Explains how image and video processing can be targeted for the Versal ACAP by utilizing the different engines (Scalar Engine, Adaptable Engine, and Intelligent Engine). Also describes the AI Engine development flow.

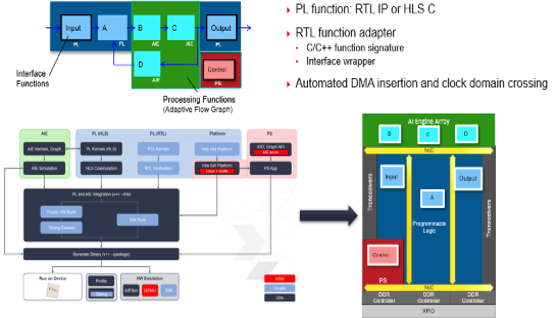

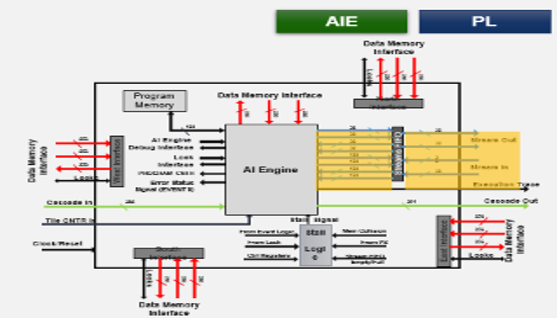

Objective: Describes the implementation of AI Engine and programmable logic (PL) kernels and how to implement functions in the AI Engine so that they take advantage of its low power consumption.

Objective: Describes the programming model for the implementation of stream interfaces for the AI Engine kernels and PL kernels. Lists the stream data types that are supported by AI Engine and PL kernels.

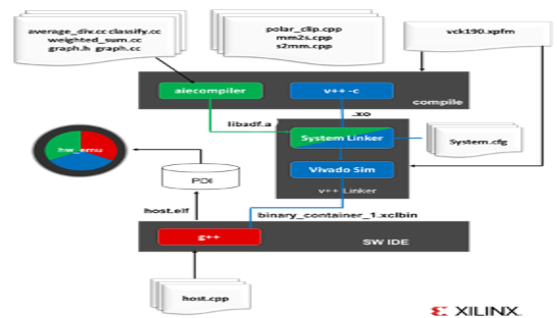

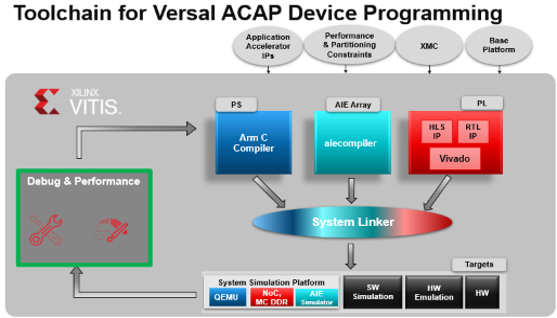

Objective: Demonstrates the Vitis compiler flow to integrate a compiled AI Engine design graph (libadf.a) with additional kernels implemented in the PL region of the device (including HLS and RTL kernels) and link them for use on a target platform. You can then call these compiled hardware functions from a host program running on the Arm® processor in the Versal device or on an external x86 processor.

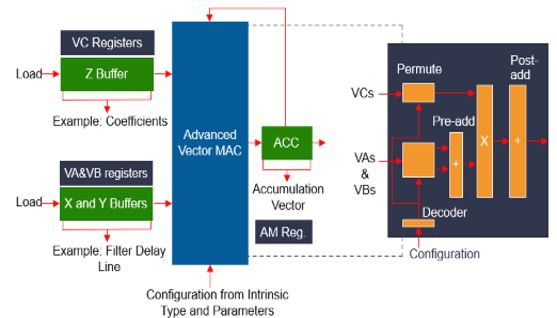

Objective: Describes the Versal AI Engine APIs for arithmetic, comparison, and reduction operations. For advanced users, describes how to use advanced intrinsic functions to implement filters such as non-symmetric FIRs, symmetric FIRs, and half-band decimators.
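To illustrate why symmetric FIRs are treated separately from non-symmetric ones, the following is a minimal sketch in plain, portable C++. It does not use the AI Engine intrinsics or APIs covered in the course; the function names `fir_direct` and `fir_symmetric` are hypothetical and chosen only for this example. It shows the general pre-add idea: when the coefficients satisfy h[k] = h[N-1-k], the two samples that share a coefficient can be added first, which roughly halves the number of multiplications per output sample.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Direct-form FIR: y[n] = sum over k of h[k] * x[n-k], with x[m] = 0 for m < 0.
// Uses N multiplications per output sample (N = number of taps).
std::vector<long> fir_direct(const std::vector<long>& h,
                             const std::vector<long>& x) {
    std::vector<long> y(x.size(), 0);
    for (std::size_t n = 0; n < x.size(); ++n) {
        for (std::size_t k = 0; k < h.size() && k <= n; ++k) {
            y[n] += h[k] * x[n - k];
        }
    }
    return y;
}

// Symmetric FIR: requires h[k] == h[N-1-k].
// Samples that share a coefficient are added before multiplying (pre-add),
// so each output needs about N/2 multiplications instead of N.
std::vector<long> fir_symmetric(const std::vector<long>& h,
                                const std::vector<long>& x) {
    const std::size_t N = h.size();
    for (std::size_t k = 0; k < N / 2; ++k) {
        assert(h[k] == h[N - 1 - k]);  // precondition: symmetric taps
    }
    // Returns x[n - delay], or 0 when that index lies before the input start.
    auto sample = [&x](std::size_t n, std::size_t delay) -> long {
        return delay <= n ? x[n - delay] : 0;
    };
    std::vector<long> y(x.size(), 0);
    for (std::size_t n = 0; n < x.size(); ++n) {
        for (std::size_t k = 0; k < N / 2; ++k) {
            y[n] += h[k] * (sample(n, k) + sample(n, N - 1 - k));
        }
        if (N % 2 == 1) {
            y[n] += h[N / 2] * sample(n, N / 2);  // unpaired centre tap
        }
    }
    return y;
}
```

For any symmetric coefficient vector, both functions return the same output; only the multiplication count differs. How this maps onto AI Engine vector intrinsics is the subject of the module itself and is not shown here.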

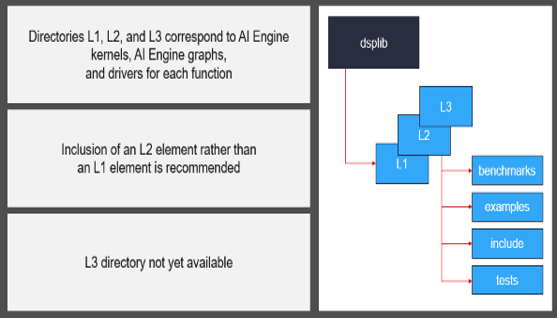

Objective: Provides an overview of the available DSP library, which enables faster development and comes with ready-to-use example designs that help with using the library and tools.

Objective: Covers advanced features such as using initialization functions, writing directly using streams from the AI Engine, cascade streams, core location constraints, and buffer location constraints.

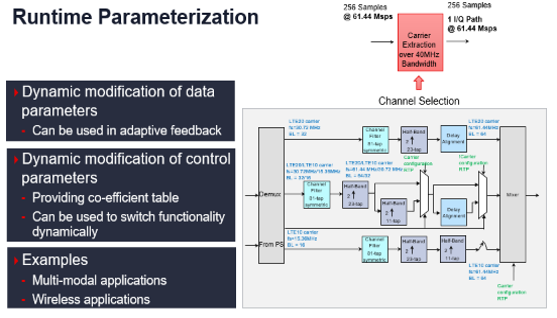

Objective: Describes how to implement runtime parameterization, which can be used as adaptive feedback and to switch functionality dynamically.

Objective: Shows how to debug the AI Engine application running on the Linux OS and how to debug via hardware emulation, which allows simulation of the application.